KSA Cybersecurity Awareness Survey

Region: Middle East

Author(s): Geetanshi Chugh

Product Code: KR1545

October 2025

90

About the Report

Survey Overview

Ken Research conducted the KSA Cybersecurity Awareness Survey among IT and technical software professionals. Respondents were screened against an expected incidence rate of roughly 10–12% to ensure role fit across small, mid-sized, and large organizations, and they completed a structured online questionnaire on a voluntary, anonymous basis.

Fieldwork ran December 2024–January 2025. After rigorous noise-filtration and quality checks, N=300 validated responses were analyzed, yielding clear trends, gaps, and actionable insights on cybersecurity awareness and behaviors to inform training, policy, and investment decisions.

Survey Analysis

General Cybersecurity Awareness

Staff awareness

Cybersecurity awareness clearly improves with organization size. Smaller firms cluster at the low end of the awareness spectrum, mid-sized companies mostly sit in the “moderate” band, and large enterprises show the highest shares of “high” and “very high.” The pattern suggests that scale brings more structured policies, training cadence, and leadership attention to security behavior.

Incidents in the last 12 months

Smaller organizations mostly report only a handful of phishing incidents each month, either because detection and reporting are weak or because their exposure is genuinely lower, while mid-sized and large firms acknowledge more activity. Across the sample, a sizeable minority of respondents still rate their organization's awareness as “low” or “very low,” underscoring the need for cross-sector nudges on reporting discipline and basic hygiene.

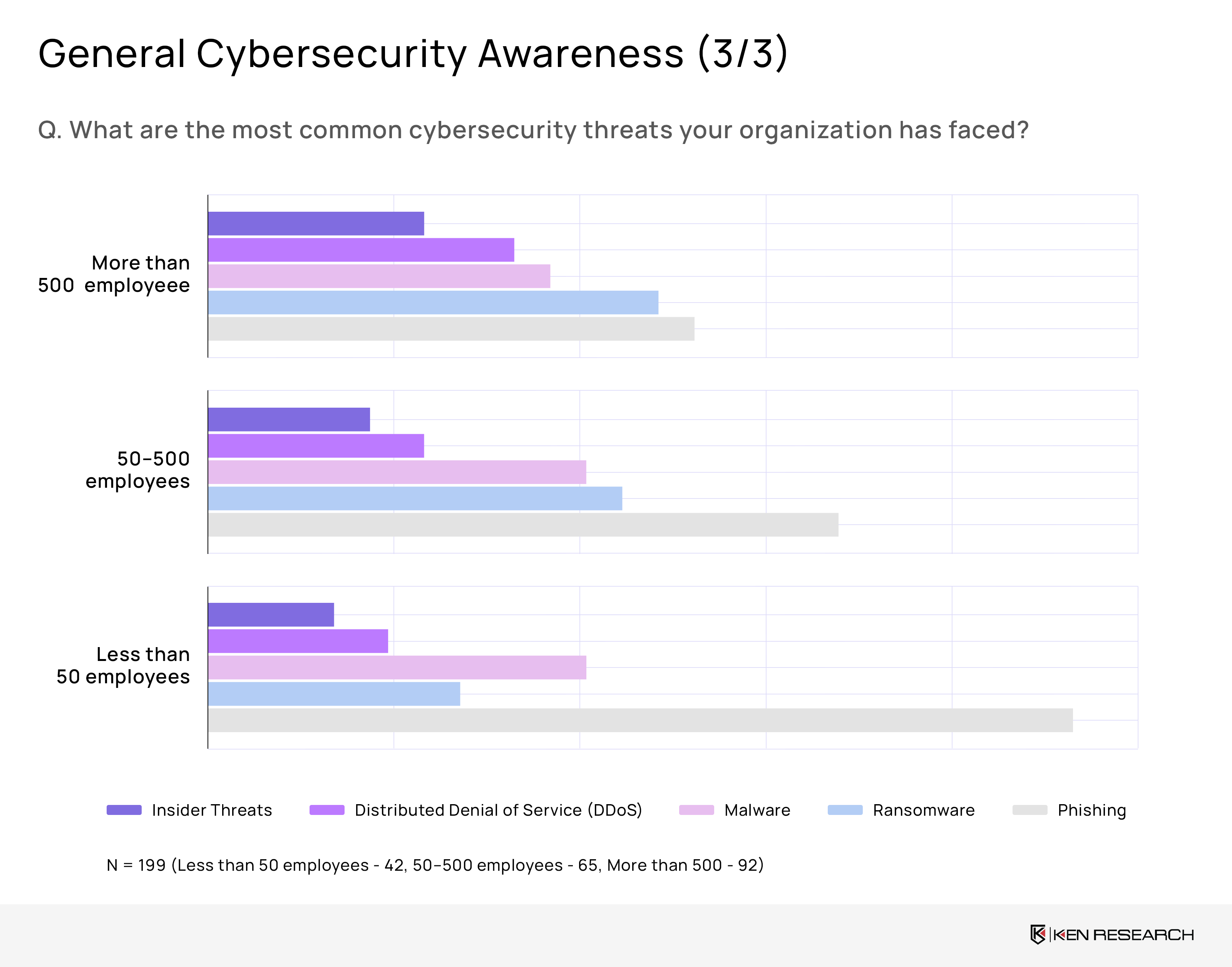

Common threats

Phishing dominates, with roughly 223 reported instances across all company sizes, signaling the need for stronger email defenses and user training. Ransomware ranks second and is most prevalent in large firms, with about 85 cases among organizations with more than 500 employees, pointing to gaps in endpoint protection and backup rigor at scale.

Phishing Awareness

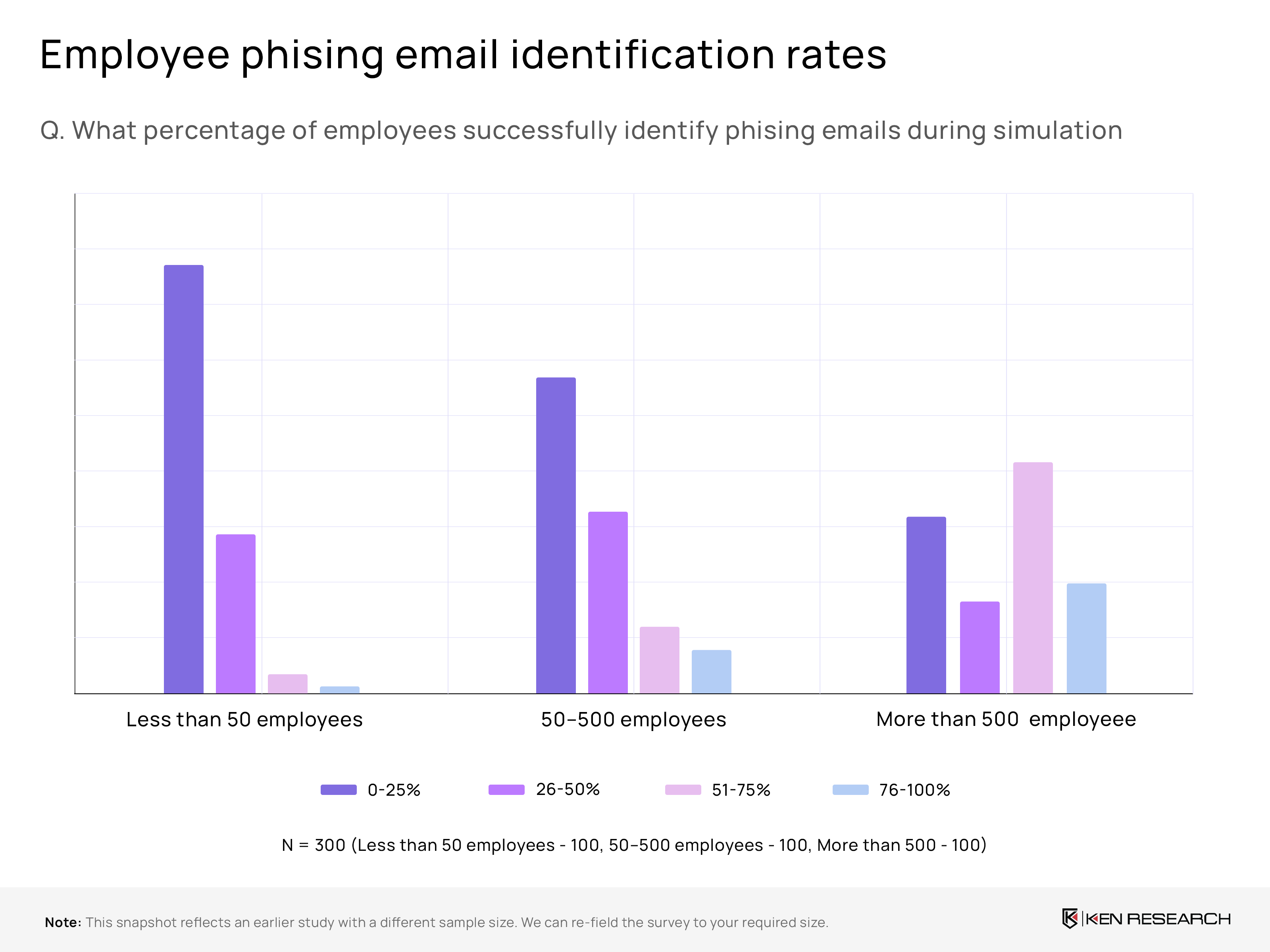

Phishing identification rates

In very small firms, phishing detection is weakest: roughly 70% of employees sit in the 0–25% correct-identification band, signaling thin training. Performance rises with scale; large enterprises typically enable about half to nearly three-fifths of staff to spot attempts, aided by structured simulations, centralized tooling, and recurring coaching rhythms that institutionalize response.

Policy for reporting phishing

Reporting discipline strengthens with organizational maturity. Many small businesses still lack a formal escalation path, creating blind spots and slower containment. Mid-market firms increasingly codify procedures into handbooks and ticketing workflows, while large organizations embed reporting into onboarding, automation, and manager reminders—shortening response cycles and enabling trend analysis that directs targeted awareness interventions where lapses persist.

Monthly phishing reports

Reported volumes mirror detection culture more than absolute risk. Very small companies rarely log many incidents, reflecting under-detection or hesitancy to escalate. Mid-sized organizations show a healthier spread as controls and norms mature. The largest enterprises report the most, driven by stronger monitoring, frequent simulations, and a no-blame culture that encourages immediate employee escalation and disciplined follow-through.

Ransomware

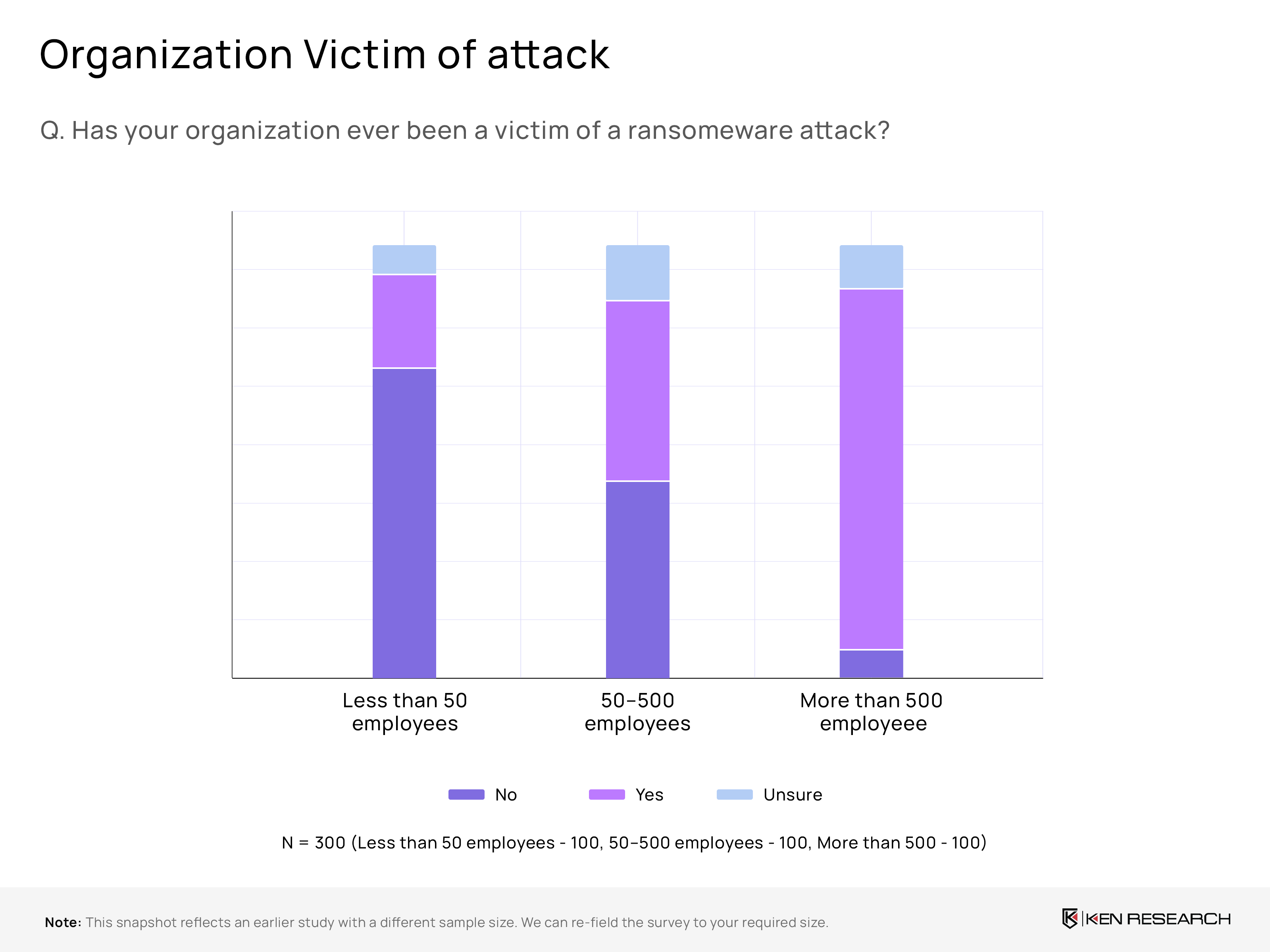

Ransomware exposure

Large enterprises appear prime targets: roughly 89% of organizations with >500 employees report having been victims of ransomware, versus about 45% among mid-sized firms (50–500). Smaller firms (<50) report far fewer incidents, but the mix suggests both scale and data value make bigger companies disproportionately attractive to attackers.

Readiness gap: response & recovery planning

Preparedness is uneven. Many organizations—especially small and mid-sized—still lack formal ransomware playbooks, coordinated roles, or tested escalation paths. By contrast, large enterprises typically institutionalize plans, tabletop drills, and vendor contracts, shortening decision cycles and enabling faster containment, forensics, and restoration when breaches occur.

Recovery confidence and resilience

Confidence in recovering data without paying ransom tracks maturity. Smaller firms commonly express low assurance, reflecting limited backups, fragmented tooling, and scarce expertise. Mid-sized organizations sit at moderate or mixed confidence. Larger enterprises report moderate-to-high confidence, supported by layered backups, immutable storage, and regularly validated disaster-recovery procedures.

AI in Cybersecurity

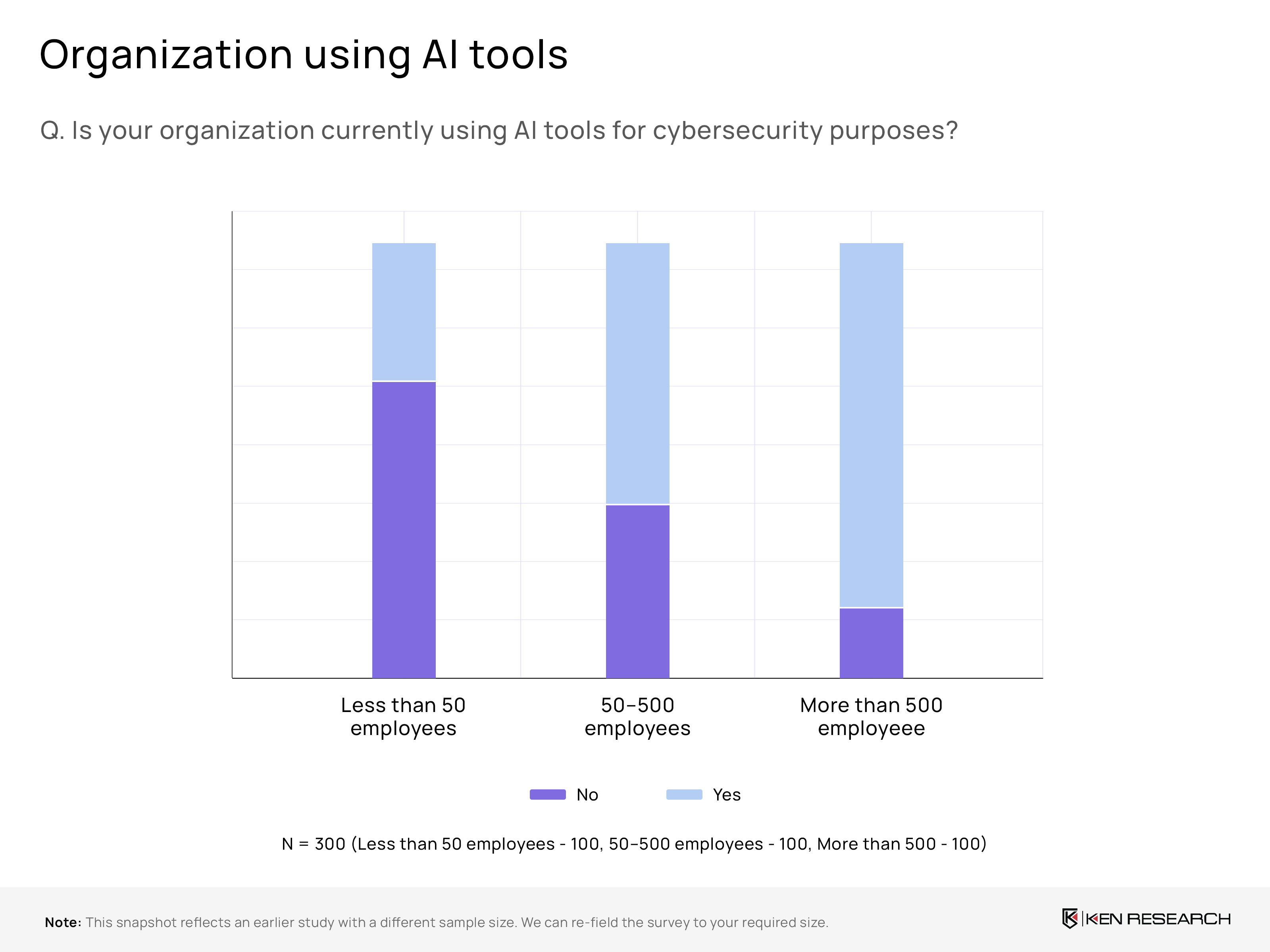

AI adoption level

AI is becoming mainstream in cybersecurity, with around 59% of surveyed organizations using AI tools. Uptake rises sharply with scale—nearly 84% of large enterprises report deployment, versus far fewer small firms—indicating budget, data availability, and tooling maturity concentrate in bigger setups, widening the capability gap unless SMB-friendly solutions emerge.

Where AI helps most

AI adds the most value in people-focused prevention. Organizations rate employee training and awareness as the most effective application, using AI to personalize content, automate phishing simulations, and flag risky behavior. Threat detection and risk analysis follow, with AI surfacing anomalies and exposure paths faster than manual SOC workflows.

Anxiety about adversarial AI

Concern about adversarial AI is highest in large enterprises. Leaders anticipate AI-powered phishing, deepfakes, and automated reconnaissance raising attack speed and credibility, stretching human review. Many are responding with governance guardrails, red-team exercises, and user education, but smaller firms still juggle tooling complexity and budgets, risking slower preparedness against evolving attacker playbooks.

Cybersecurity Skills

In-house Expertise for Threats

Cybersecurity capability concentrates with size. Small firms often lack dedicated specialists and broad coverage, while midsize players are mixed. Large enterprises centralize blue-team functions, run defined playbooks, and embed security in engineering—making them tougher targets but also more process-dependent during fast-moving incidents.

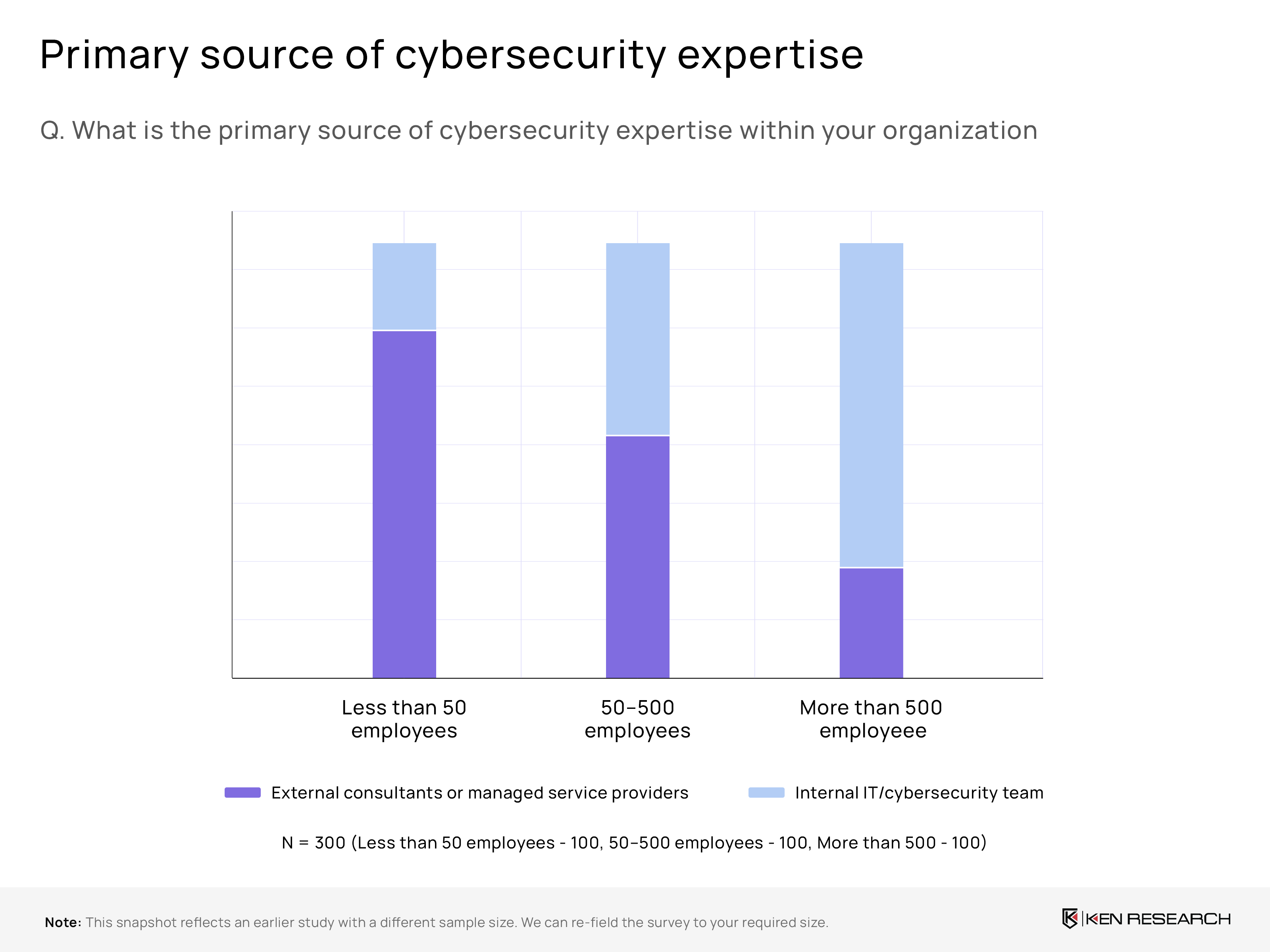

Primary Source of Expertise

Sourcing shifts as organizations grow: around 79% of sub-50 firms rely on external providers versus roughly 24% among 500+ companies. Conversely, internal teams dominate in large enterprises, reflecting bigger budgets, compliance demands, and the need to retain institutional knowledge.

Cybersecurity Training Frequency

Quarterly programs remain rare, with small businesses least likely to train—creating persistent behavior gaps. Midsize and large firms do better but still underinvest in scenario-based refreshers. Without rhythm and reinforcement, phishing resilience, incident handoffs, and zero-day readiness degrade regardless of tooling or headcount.

Most Lacking Security Skills

The hardest capabilities to staff are advanced ones: AI/ML for threat detection and response, followed by cloud security. Around one-third of respondents highlight AI/ML gaps and roughly one-quarter cite cloud security shortfalls in the fourth chart, pointing to fast-evolving stacks and a limited practitioner supply that call for role-based curricula and selective partner support.

Policy and Investment

Local Data Policy Compliance

Larger organizations lean toward strict local compliance due to audits, operational risk, and sensitive data. Smaller firms prefer flexibility with global data centers, likely for scalability, cost efficiency, and lighter regulatory overheads.

Cybersecurity Budget Allocation

Budgets scale with size: around 70% of large organizations allocate 13% or more of IT spend to cybersecurity, while roughly 66% of small firms keep it under 10%, signaling potential protection gaps and weaker defenses.

Policy Review Frequency

Most organizations update their cybersecurity policies once a year. Smaller companies rely heavily on this annual cycle, which may lag fast-moving threats, while larger firms show stronger governance around periodic reviews.

Adoption Challenges

Top hurdles differ by size: around 61% of large enterprises struggle to keep up with evolving threats, whereas nearly 40% of mid-sized firms cite insufficient expertise. Small firms most often cite low awareness and budget limits.

Table of Contents

1. Executive Summary

1.1 Key Objectives of the Survey

1.2 Target Audience and Methodology Overview

1.3 Major Findings and Strategic Implications

2. Survey Overview

2.1 Purpose and Objectives

2.2 Target Respondent Profile

2.3 Screening and Participation Criteria

2.4 Fieldwork Duration and Data Validation

2.5 Analytical Framework

3. Survey Analysis

3.1 General Cybersecurity Awareness

3.1.1 Staff Awareness by Organization Size

3.1.2 Reported Incidents in the Last 12 Months

3.1.3 Common Cyber Threats (Phishing, Ransomware, Others)

3.2 Phishing Awareness

3.2.1 Phishing Identification Rates Across Company Sizes

3.2.2 Policy and Processes for Reporting Phishing

3.2.3 Monthly Phishing Report Trends

3.3 Ransomware Exposure and Resilience

3.3.1 Ransomware Exposure by Company Size

3.3.2 Response and Recovery Planning Gaps

3.3.3 Data Recovery Confidence and Resilience

3.4 AI in Cybersecurity

3.4.1 AI Adoption Levels and Deployment by Organization Size

3.4.2 Key Use Cases and Value Areas

3.4.3 Concerns Around Adversarial AI and Preparedness

3.5 Cybersecurity Skill Landscape

3.5.1 In-house Expertise for Threat Detection and Response

3.5.2 Primary Source of Cybersecurity Expertise (Internal vs External)

3.5.3 Training Frequency and Behavioral Gaps

3.5.4 Most Lacking Security Skills (AI/ML, Cloud Security, etc.)

3.6 Policy and Investment Patterns

3.6.1 Local Data Policy Compliance Practices

3.6.2 Cybersecurity Budget Allocation by Organization Size

3.6.3 Policy Review Frequency and Governance Rhythm

3.6.4 Top Adoption Challenges and Barriers

4. Research Methodology

4.1 Team Training and Engagement Protocols

4.2 Sampling Design and Anonymity Assurance

4.3 Follow-ups for Deep Insights

4.4 Data Quality and Validation Controls

4.5 Analytical Tools and Interpretation Approach

5. Key Insights and Recommendations

5.1 Strategic Gaps Identified

5.2 Sector-Specific Awareness Needs

5.3 Recommended Actions for Policymakers and Enterprises

5.4 Implications for Cybersecurity Vendors and Training Providers

Research Methodology

Team Training & Protocol

Surveyors were trained on engagement protocols for Tech Heads, Managers, IT Admin Heads, CTOs/CIOs, and Digital Heads. They administered the online questionnaire, ensured data accuracy, and clarified cybersecurity topics for a smooth respondent experience.

Targeted Sampling & Anonymity

Responses were collected via online platforms from targeted stakeholders with voluntary participation. To keep results unbiased, respondents’ identities were kept anonymous.

Follow-ups for Deeper Insight

Participants scoring very low on selected attributes were contacted for brief follow-ups to understand challenges and solutions. They provided 2–3 qualitative or quantitative inputs where scoring alone could not address client-specific criteria.

Data Quality Controls

A noise-filtration process monitored response patterns, reviewed time spent per question, and cross-verified inconsistencies. Sampling was evenly split across large, medium, and small entities—100 responses from each group.
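The report does not publish its exact noise-filtration rules, so the following is only a minimal sketch of how the three checks named above (response patterns, time spent per question, cross-verified inconsistencies) might be applied. The field names, the 40%-of-median speed threshold, and the straight-lining rule are hypothetical assumptions for illustration; only the size bands (<50, 50–500, >500 employees) come from the report.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Response:
    respondent_id: str
    seconds_per_question: list[float]  # hypothetical: time spent on each question
    grid_answers: list[int]            # hypothetical: answers to a 1-5 rating grid
    size_band: str                     # self-reported band: "small", "mid", "large"
    employee_count: int                # self-reported headcount


def band_for(employees: int) -> str:
    # Size bands as used in the report: <50, 50-500, >500 employees.
    if employees < 50:
        return "small"
    if employees <= 500:
        return "mid"
    return "large"


def flag_reasons(r: Response, median_total: float, speed_ratio: float = 0.4) -> list[str]:
    # Collect every reason this response looks like noise (assumed rules).
    reasons = []
    if sum(r.seconds_per_question) < speed_ratio * median_total:
        reasons.append("speeder")  # finished far faster than the typical respondent
    if len(r.grid_answers) > 1 and len(set(r.grid_answers)) == 1:
        reasons.append("straight-lining")  # identical answer on every grid item
    if band_for(r.employee_count) != r.size_band:
        reasons.append("inconsistent size")  # two answers contradict each other
    return reasons


def filter_noise(responses: list[Response]) -> tuple[list[Response], dict[str, list[str]]]:
    # Compare each respondent's total time with the median across the sample.
    median_total = median(sum(r.seconds_per_question) for r in responses)
    kept: list[Response] = []
    rejected: dict[str, list[str]] = {}
    for r in responses:
        reasons = flag_reasons(r, median_total)
        if reasons:
            rejected[r.respondent_id] = reasons
        else:
            kept.append(r)
    return kept, rejected


if __name__ == "__main__":
    sample = [
        Response("a", [30, 40, 35], [4, 3, 5], "small", 20),
        Response("b", [5, 4, 3], [3, 3, 3], "mid", 200),
        Response("c", [25, 30, 40], [2, 4, 4], "large", 120),
    ]
    kept, rejected = filter_noise(sample)
    print([r.respondent_id for r in kept])  # ['a']
    print(rejected)  # {'b': ['speeder', 'straight-lining'], 'c': ['inconsistent size']}
```

Returning the reasons alongside the rejected IDs, rather than silently dropping responses, keeps the filter auditable, which matters when the validated sample is meant to stay balanced at 100 responses per size group.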

Analysis & Outputs

Data was analyzed using statistical and qualitative methods to surface trends aligned with research objectives. Findings are intended to support PR initiatives and enhance local sales efforts in Saudi Arabia.

Why Buy From Us?

What makes us stand out is that our consultants follow the Robust, Refine and Result (RRR) methodology: Robust for clear definitions, approaches, and sanity checks; Refine for differentiating respondents' facts from opinions; and Result for presenting data as a story.

We have set a benchmark in the industry by offering our clients syndicated and customized market research reports that cover the entire market, backed by meticulous research and analyst insights.

While we don't replace traditional research, we turn the method upside down. Our dual Top-Bottom and Bottom-Top approach ensures quality deliverables by verifying not just company fundamentals but also sector and macroeconomic factors.

Always looking one step ahead, our research team constantly tries to show you the bigger picture. We help with some of the tough questions you may encounter along the way: How is the industry positioned? What is the best marketing channel? What are competitors' KPIs? By aligning every element, we help maximize success.

Our report gives you instant access to the answers and sources that other companies might choose to hide. We explain each step of the research methodology we used and disclose the sample size to earn your trust.

If you need any support, we are here! We pride ourselves on universe strength, data quality, and quick, friendly, and professional service.